Touch is a crucial sensor modality for both humans and robots, as it allows us to directly sense object properties and interactions with the environment. Recently, touch sensing has become more prevalent in robotic systems, thanks to the increased accessibility of inexpensive, reliable, and high-resolution tactile sensors and skins. Just as the widespread availability of digital cameras accelerated the development of computer vision, we believe that we are rapidly approaching a new field of computational science dedicated to touch processing.

However, a key question is now becoming critically important as the field gradually transitions from hardware development to real-world applications: How do we make sense of touch? While the output of modern high-resolution tactile sensors and skins shares similarities with the images used in computer vision, touch presents challenges unique to its sensing modality. Unlike images, touch information is shaped by its temporal components, its intrinsically active nature, and its very local sensing, in which a small subset of a 3D space is sensed on a 2D embedding.

We believe that AI/ML will play a critical role in successfully processing touch as a sensing modality. However, this raises important questions regarding which computational models are best suited to leverage the unique structure of touch, similar to how convolutional neural networks leverage spatial structure in images.

The development and advancement of touch processing will greatly benefit a wide range of fields, including tactile and haptic use cases. For instance, advancements in tactile processing (from the environment to the system) will enable robotic applications in unstructured environments, such as agricultural robotics and telemedicine. Understanding touch will also facilitate providing sensory feedback to amputees through sensorized prostheses and enhance future AR/VR systems.

The goal of this first workshop on touch processing is to seed the foundations of a new computational science dedicated to the processing and understanding of touch sensing. By bringing together, for the first time, experts with diverse backgrounds, we hope to start discussing and nurturing this new field of touch processing and to pinpoint its scientific challenges for the years to come. In addition, through this workshop, we hope to build awareness and lower the barrier to entry for AI researchers interested in approaching this new field. We believe this workshop can be beneficial for building a community where researchers can work together at the intersection of touch sensing and AI/ML.

Important dates

- Submission deadline: 02 October 2023 (AOE, Anywhere on Earth)

- Notification: 27 October 2023 (AOE)

- Camera ready: 18 November 2023 (AOE)

- Workshop: 15 December 2023

Schedule

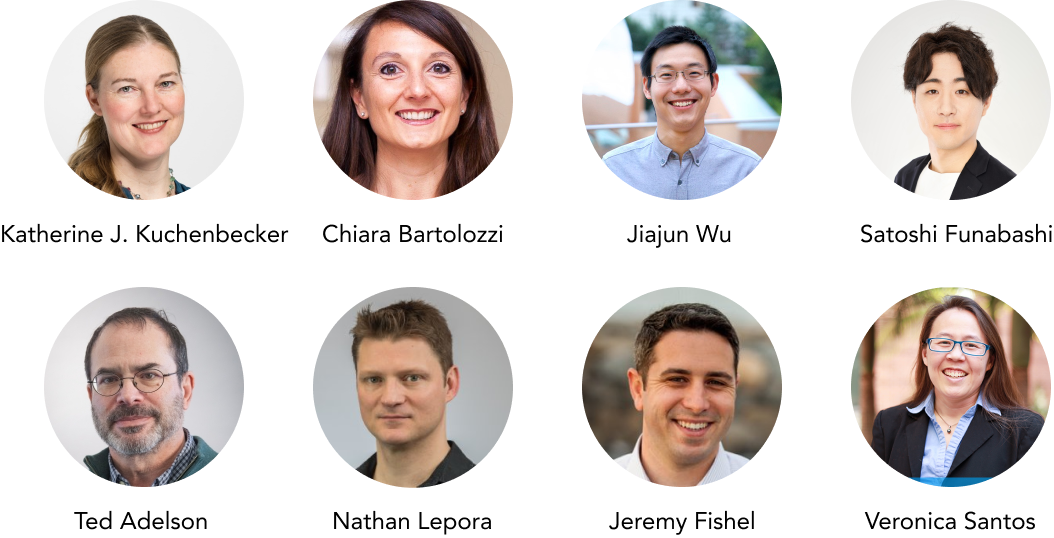

| Time (Local Time, CST) | Title | Speaker |
|---|---|---|
| 08:45 - 09:00 | Opening Remark | Organizers |
| 09:00 - 09:30 | Neuromorphic Touch: from sensing to perception | Chiara Bartolozzi |
| 09:30 - 10:00 | Poster Spotlights | |
| 10:00 - 11:00 | Coffee Break + Poster Session | |
| 11:00 - 11:30 | Multi-Sensory Neural Objects: Modeling, Datasets, and Applications | Jiajun Wu |
| 11:30 - 12:00 | Hand Morphology from Tactile Sensing with Spatial Deep Learning for Dexterous Tasks | Satoshi Funabashi |
| 12:00 - 13:30 | Lunch Break | |
| 13:30 - 14:00 | Building fingers and hands with vision-based tactile sensing | Ted Adelson |
| 14:00 - 14:30 | Progress in real, simulated and sim2real optical tactile sensing | Nathan Lepora |
| 14:30 - 15:00 | Using touch to create human-like intelligence in general-purpose robots | Jeremy Fishel |
| 15:00 - 15:50 | Coffee Break + Poster Session | |
| 15:50 - 16:00 | Award Ceremony | |
| 16:00 - 16:30 | Tactile perception for human-robot systems | Veronica Santos |
| 16:30 - 17:00 | Haptic Intelligence | Katherine J. Kuchenbecker |
| 17:00 - 17:30 | Panel Discussion | |

Accepted Papers

- Tactile Active Texture Recognition With Vision-Based Tactile Sensors.
  Alina Boehm, Tim Schneider, Boris Belousov, Alap Kshirsagar, Lisa Lin, Katja Doerschner, Knut Drewing, Constantin Rothkopf, Jan Peters.

- Tactile Sensing for Stable Object Placing.
  Luca Lach, Niklas Funk, Georgia Chalvatzaki, Robert Haschke, Jan Peters, Helge Ritter.

- Towards Transferring Tactile-based Continuous Force Control Policies from Simulation to Robot.
  Luca Lach, Robert Haschke, Davide Tateo, Jan Peters, Helge Ritter, Júlia Borràs, Carme Torras.

- An Embodied Biomimetic Model of Tactile Perception.
  Luke Burguete, Thom Griffith, Nathan Lepora.

- Attention for Robot Touch: Tactile Saliency Prediction for Robust Sim-to-Real Tactile Control.
  Yijiong Lin, Mauro Comi, Alex Church, Dandan Zhang, Nathan Lepora.

- Bi-Touch: Bimanual Tactile Manipulation with Sim-to-Real Deep Reinforcement Learning.
  Yijiong Lin, Alex Church, Max Yang, Haoran Li, John Lloyd, Dandan Zhang, Nathan Lepora.

- Robot Synesthesia: In-Hand Manipulation with Visuotactile Sensing.
  Ying Yuan, Haichuan Che, Yuzhe Qin, Binghao Huang, Zhao-Heng Yin, Kangwon Lee, Yi Wu, Soo-chul Lim, Xiaolong Wang.

- TouchSDF: A DeepSDF Approach for 3D Shape Reconstruction Using Vision-Based Tactile Sensing.
  Mauro Comi, Yijiong Lin, Alex Church, Laurence Aitchison, Nathan Lepora.

- Touch Insight Acquisition: Human’s Insertion Strategies Learned by Multi-Modal Tactile Feedback.
  Kelin Yu, Yunhai Han, Matthew Zhu, Ye Zhao.

- Blind Robotic Grasp Stability Estimation Based on Tactile Measurements and Natural Language Prompts.
  Jan-Malte Giannikos, Oliver Kroemer, David Leins, Alexandra Moringen.

- ViHOPE: Visuotactile In-Hand Object 6D Pose Estimation with Shape Completion.
  Hongyu Li, Snehal Dikhale, Soshi Iba, Nawid Jamali.

- Curved Tactile Sensor Simulation with Hydroelastic Contacts in MuJoCo.
  Florian Patzelt, David Leins, Robert Haschke.

Organizers

- Roberto Calandra (TU Dresden, Germany)

- Haozhi Qi (UC Berkeley, United States)

- Perla Maiolino (University of Oxford, United Kingdom)

- Mike Lambeta (Meta AI, United States)

- Jitendra Malik (UC Berkeley, United States)

- Yasemin Bekiroglu (Chalmers / University College London, United Kingdom)

Call for Papers

We welcome submissions focused on all aspects of touch processing, including but not limited to the following topics:

- Computational approaches to process touch data.

- Learning representations from touch and/or multimodal data.

- Tools and libraries that can lower the barrier of touch sensing research.

- Collection of large-scale tactile datasets.

- Applications of touch processing.

We encourage relevant works at all stages of maturity, ranging from initial exploratory results to polished full papers. Accepted papers will be presented in the form of posters, with outstanding papers being selected for spotlight talks.

CeTI is generously sponsoring a best paper award and a best poster award, each with a prize of 100 euros. The prizes will be awarded during the workshop.

Contacts

For questions related to the workshop, please email workshop@touchprocessing.org.